Use Cases & Upskilling

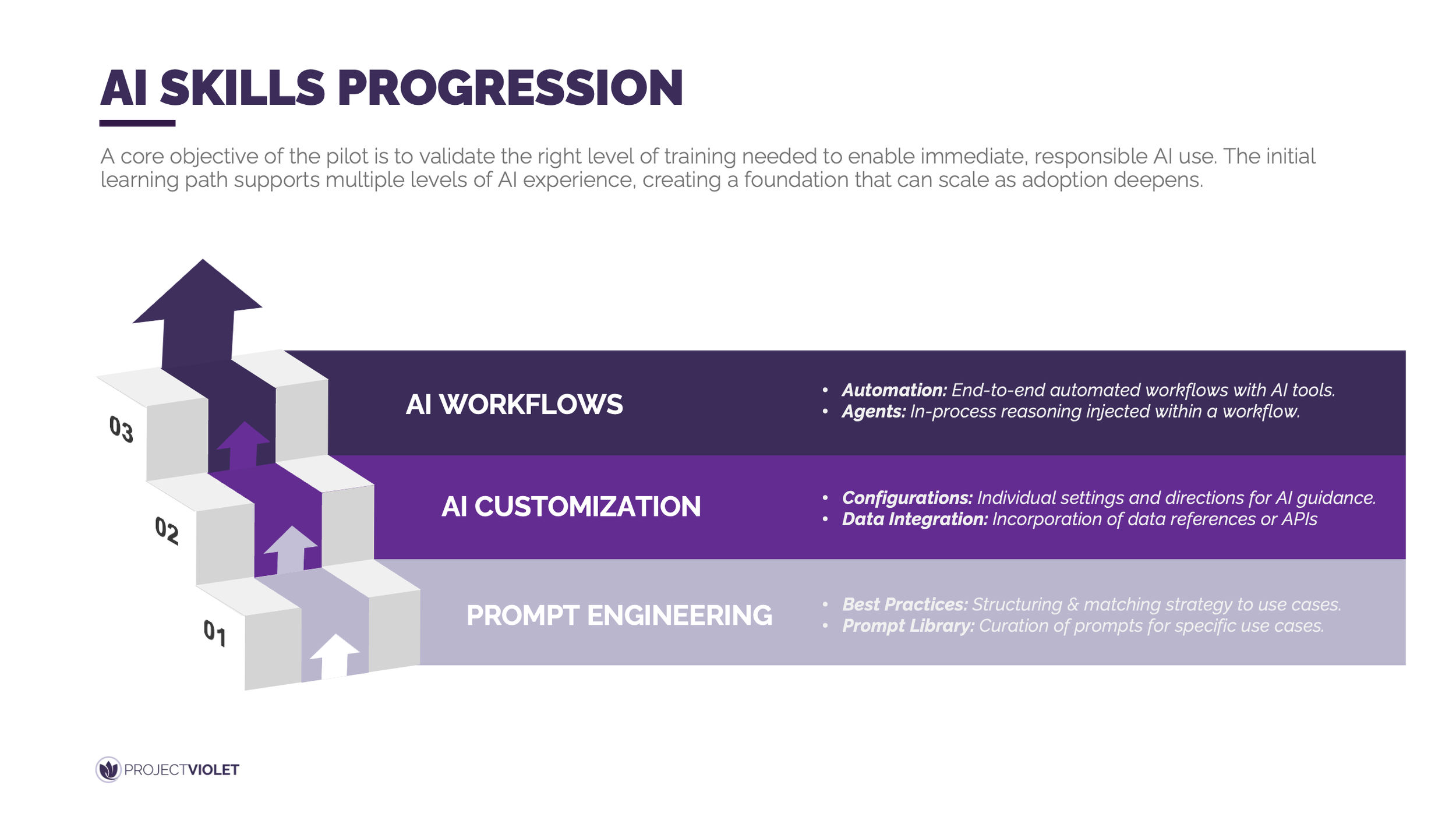

A core objective of the pilot is to validate the right level of training needed to enable immediate, responsible AI use. The initial learning path supports multiple levels of AI experience and is designed to scale as adoption deepens.

AI adoption accelerates when teams progress deliberately from prompt engineering to customization and then into full workflows, rather than attempting advanced automation too early. Foundational prompt engineering builds confidence and consistency by teaching people how to structure requests and reuse proven patterns. Customization introduces stronger guidance through configurations and light data integration, allowing AI outputs to align more closely with role-specific needs. Workflow development then unlocks the highest value by connecting multiple steps, tools, and decisions into repeatable, end-to-end processes that meaningfully change how work gets done.

In practice, this progression allows teams to move at different speeds without losing alignment. Beginners can deliver immediate value through better prompting, while more advanced users focus on customized assistants and targeted automations. This layered approach sets the stage for identifying the people and roles needed to sustain and scale AI usage across the organization.

AI Pilot Use Cases

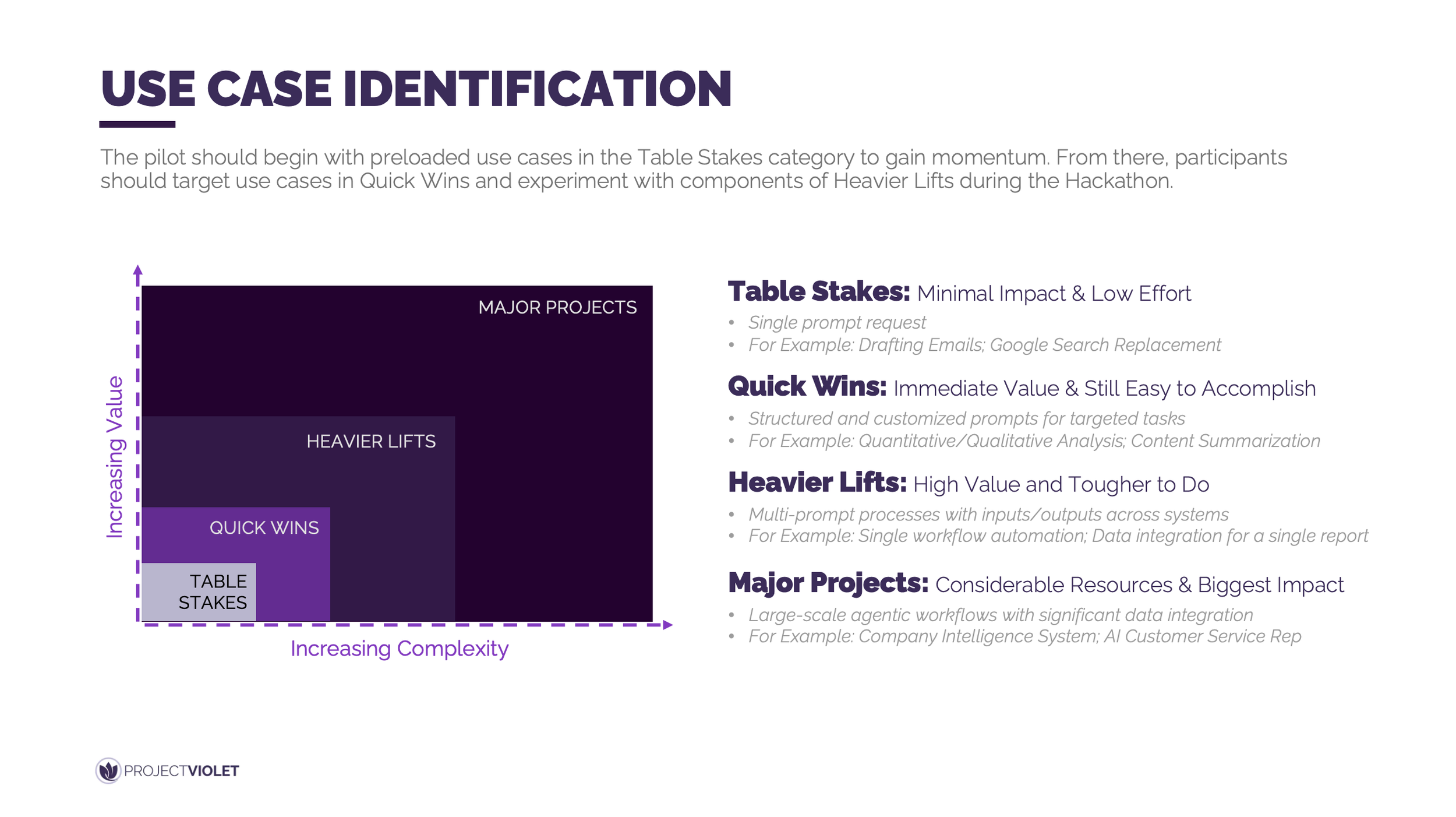

The pilot should begin with preloaded use cases in the Table Stakes category to build confidence and momentum quickly. From there, participants can move into Quick Wins and selectively experiment with elements of Heavier Lifts as skills mature.

A structured progression from low-effort to higher-complexity use cases allows teams to realize value early while building the muscle needed for more advanced AI workflows. Table Stakes focus on simple, single-prompt tasks that normalize AI usage in daily work. Quick Wins introduce more structured prompts that deliver immediate business value without heavy technical lift. Heavier Lifts require multi-step workflows and coordination across tools or systems, and while they are not the primary focus of the pilot, targeted experimentation helps participants understand what is possible and what it takes to scale.

In practice, this means curating a short list of starter use cases that everyone can execute in week one, then encouraging teams to adapt and extend them into Quick Wins that matter for their role or function. Hackathon-style exploration can be used to safely test pieces of more complex workflows without committing to full-scale builds. This sequencing creates a natural bridge into the next phase, where use cases are broken down into workflows and assessed for scalability and enterprise readiness.

Sample Starter Use Cases: Have well-crafted prompts ready to share for each of your initial starter use cases.

Recap a Meeting: Use AI to summarize a meeting transcript, highlighting key decisions, discussion themes, and action items.

Summarize an Email Chain: Have AI condense long email threads into a clear summary with relevant context and next steps.

Draft an Email: Teach AI your personal or organizational tone and generate a first draft for internal communications.

Writing Support: Leverage AI to build outlines and initial drafts for presentations, documents, or reports.

Brainstorm and Ideation: Engage AI as a thinking partner to generate ideas, pressure-test assumptions, and refine strategies.
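One way to keep starter prompts ready to share is a small prompt library that participants fill in with their own material. The sketch below is illustrative only: the prompt wording, the `STARTER_PROMPTS` dictionary, and the `build_prompt` helper are hypothetical and not part of the pilot materials.

```python
from string import Template

# Hypothetical starter prompt library. The wording of each prompt is an
# illustrative assumption, not an official pilot prompt.
STARTER_PROMPTS = {
    "recap_meeting": Template(
        "Summarize the meeting transcript below. List key decisions, "
        "discussion themes, and action items.\n\n$transcript"
    ),
    "summarize_email_chain": Template(
        "Condense the email thread below into a clear summary with "
        "relevant context and next steps.\n\n$thread"
    ),
}


def build_prompt(use_case: str, **fields: str) -> str:
    """Fill in the named template.

    Raises KeyError if the use case is unknown or a placeholder is missing,
    so incomplete prompts are caught before they reach an AI tool.
    """
    return STARTER_PROMPTS[use_case].substitute(**fields)


if __name__ == "__main__":
    print(build_prompt("recap_meeting", transcript="Alice: we ship on Friday."))
```

Storing prompts as templates rather than free text makes them easy to hand out in week one and to adapt later into role-specific Quick Wins.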

Work Breakdown and AI Workflow Preparation

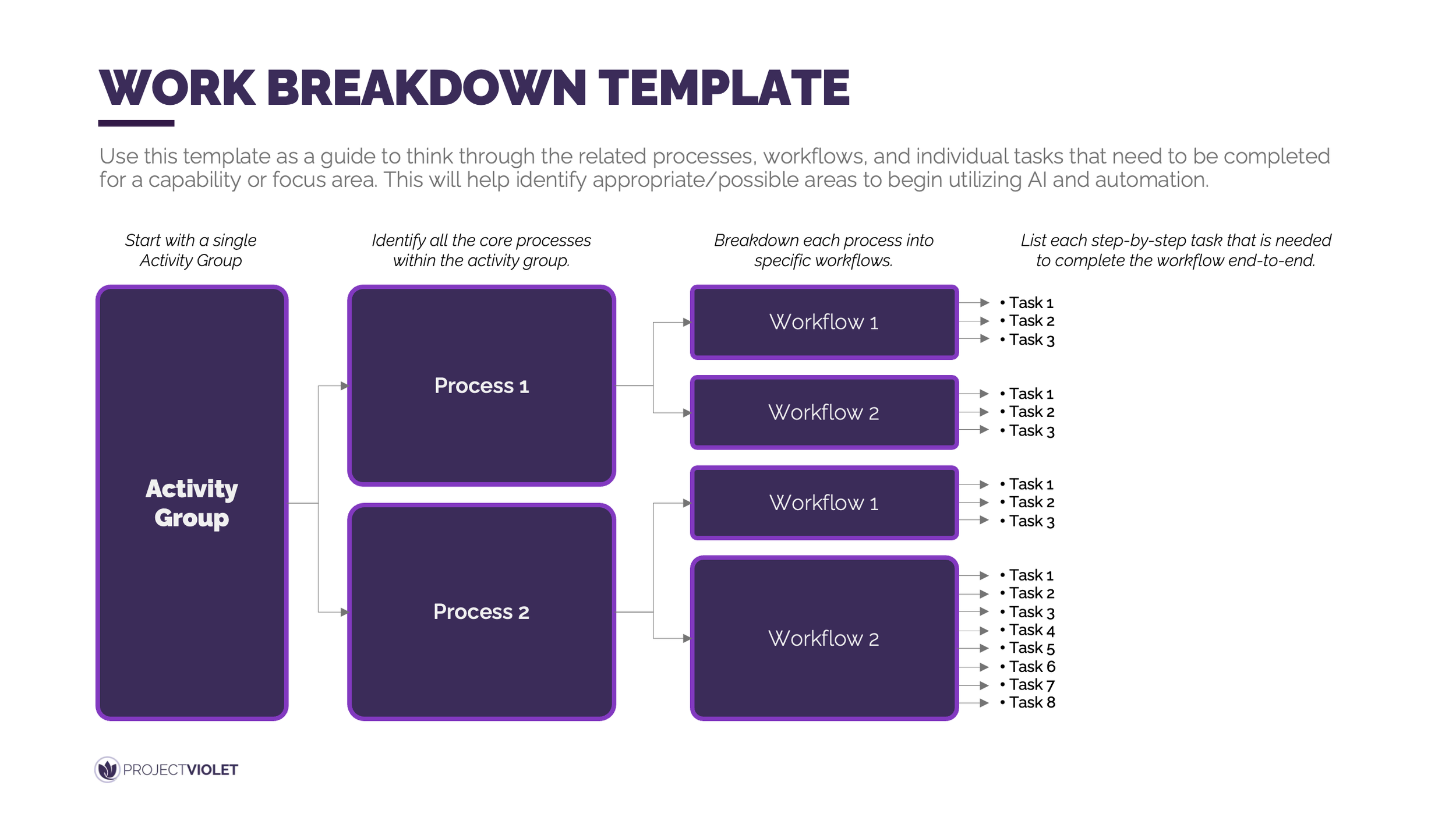

Effective AI adoption starts with a clear understanding of how work actually gets done today. This template provides a structured way for participants to break down their day-to-day activities before attempting to apply AI.

Most AI efforts stall not because of tooling limitations, but because teams skip the discipline of decomposing work into processes, workflows, and step-by-step tasks. This preparation phase requires participants to slow down and explicitly map what happens at each stage of a workflow, including required inputs, handoffs, decisions, and desired outcomes. By starting with a single activity group and progressively breaking it into processes, workflows, and tasks, participants can surface where work is repetitive, where judgment is required, and where AI could realistically add value. This clarity reduces guesswork and prevents teams from forcing AI into poorly understood or unsuitable parts of their work.
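The breakdown described above can be captured as a simple nested structure. The following is a minimal sketch, assuming each task records its inputs, output, and two flags for repetitiveness and judgment. All class names, field names, and the `ai_candidates` rule (repetitive and not judgment-heavy) are assumptions made for illustration, not the template's actual columns or a recommended scoring method.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    inputs: list[str]
    output: str
    repetitive: bool = False      # does this step recur in much the same form?
    needs_judgment: bool = False  # does a person need to make a call here?


@dataclass
class Workflow:
    name: str
    tasks: list[Task] = field(default_factory=list)


@dataclass
class Process:
    name: str
    workflows: list[Workflow] = field(default_factory=list)


@dataclass
class ActivityGroup:
    name: str
    processes: list[Process] = field(default_factory=list)


def ai_candidates(group: ActivityGroup) -> list[str]:
    """Return names of tasks that are repetitive and not judgment-heavy.

    This filter is an assumed heuristic for a first pass, not a rule from
    the pilot; teams should review the shortlist themselves.
    """
    return [
        task.name
        for process in group.processes
        for workflow in process.workflows
        for task in workflow.tasks
        if task.repetitive and not task.needs_judgment
    ]


if __name__ == "__main__":
    # Hypothetical example data for one activity group.
    reporting = ActivityGroup(
        name="Client reporting",
        processes=[
            Process(
                name="Weekly status",
                workflows=[
                    Workflow(
                        name="Prepare status update",
                        tasks=[
                            Task("Collect meeting notes", ["transcripts"], "raw notes", repetitive=True),
                            Task("Decide what to escalate", ["raw notes"], "escalation list", needs_judgment=True),
                            Task("Draft status email", ["raw notes", "escalation list"], "email draft", repetitive=True),
                        ],
                    )
                ],
            )
        ],
    )
    print(ai_candidates(reporting))
```

Writing the breakdown down in this form makes the handoffs explicit: each task's output should appear as an input to a later task, and gaps in that chain point to parts of the work that are not yet understood well enough to automate.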

In practice, this means treating workflow breakdown as intentional prework rather than overhead. Once tasks, inputs, and outputs are clearly defined, teams are far better positioned to design AI-assisted steps and connect components into coherent workflows. This groundwork sets up the next phase, where participants can confidently experiment with building and combining AI components, including during hackathon-style sessions, with far more productive and meaningful results.

